Intro

A pull request gets merged at 4:42 PM.

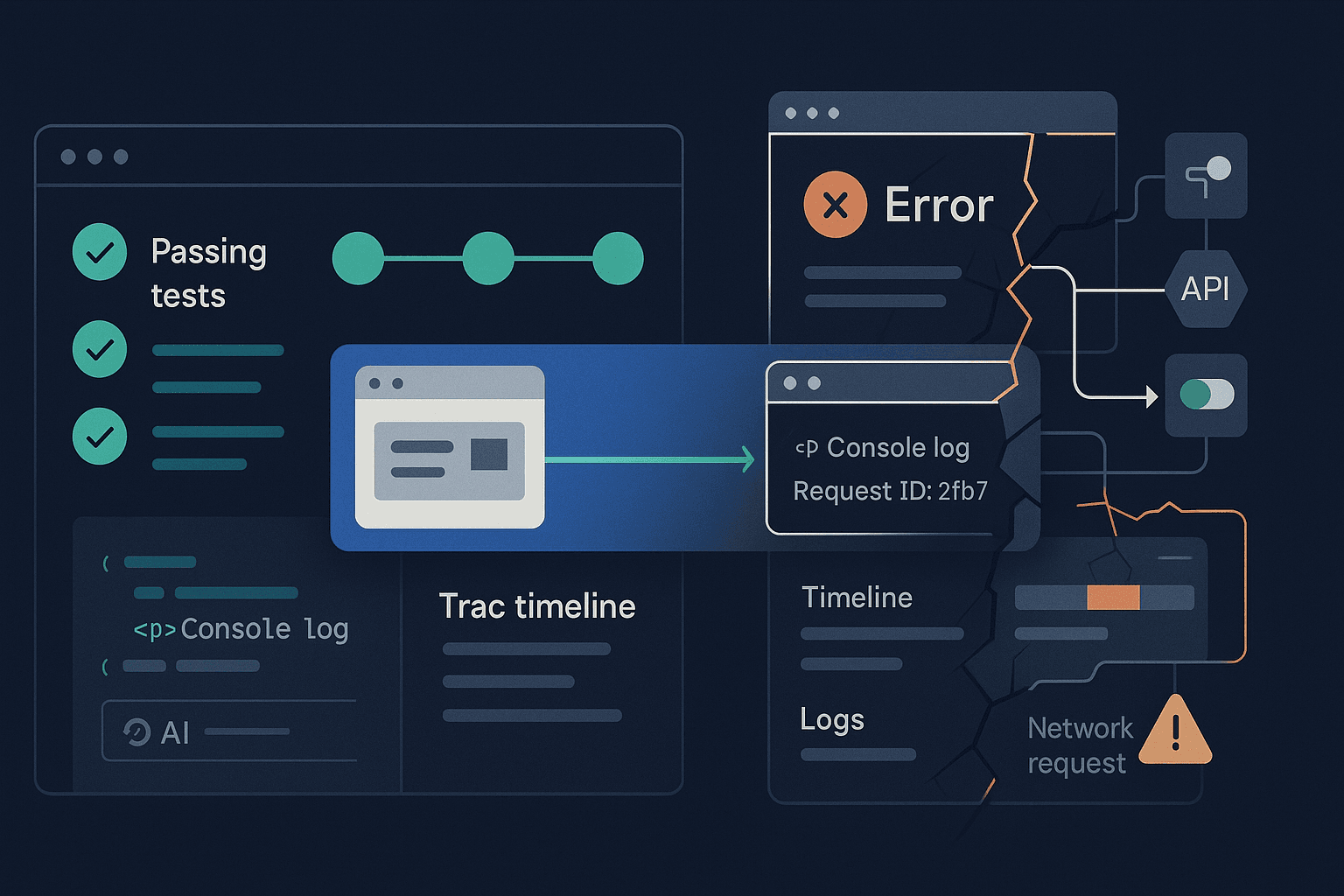

CI is green. Unit tests passed. Type checks passed. Lint passed. Playwright smoke tests passed. The release rolls out behind a feature flag, then to 10%, then 100%.

At 5:17 PM, support starts getting screenshots.

Users can’t complete checkout on Safari. A form submit button spins forever on slow networks. The backend says requests are fine, but frontend telemetry shows a spike in abandoned sessions. Engineering opens the logs and sees no obvious 500s. The database is healthy. Infrastructure dashboards look boring. Meanwhile revenue is quietly leaking.

This is not an edge case anymore. It is the default failure mode of modern software teams.

We now ship faster, with more abstraction, more generated code, more micro-decisions hidden inside frameworks, SDKs, feature flags, and AI-assisted edits. Teams often have more tests than ever, yet less confidence in whether real user workflows actually work.

That mismatch matters because the bottleneck in software delivery is no longer only writing code. It is verifying behavior across the messy boundary where browsers, networks, APIs, auth, state, third-party services, and human expectations collide.

The old model said: write code, write tests, run CI, ship.

The new reality is harsher: code can be syntactically correct, locally tested, and CI-approved while still failing at the exact moment a user tries to accomplish something important.

This article is about that gap.

More specifically, it is about why traditional testing and debugging approaches increasingly fail in AI-assisted development workflows, what teams are missing when they optimize for test counts instead of workflow reliability, and how to build a debugging and testing system that reflects how software actually breaks in production.

If you build web apps, APIs, developer tools, SaaS products, or internal platforms, this is the shift worth understanding: reliability comes less from proving isolated code paths and more from observing and validating end-to-end user intent.

The Problem: Passing Pipelines, Broken Workflows

Most teams do not ship broken software because they do not care about quality. They ship broken workflows because the systems they use to validate quality are optimized for the wrong unit of truth.

The unit of truth is rarely a function. It is rarely even a component.

It is a workflow:

- a user signs in with Google

- lands on a dashboard

- loads server-side data

- modifies filters

- uploads a CSV

- waits for a background job

- reviews results

- exports a report

- shares it with a teammate

That entire path can fail even when every individual layer appears healthy.

How this shows up in real teams

Here are common examples:

- Frontend/backend contract drift

  A backend field changes from `total_count` to `totalCount`. Type generation is stale in one package. Unit tests mock the old shape, so they still pass. Production users get an empty state instead of results.

- Auth edge cases hidden by mocks

  Local tests use stubbed auth tokens. Real sessions in production expire mid-flow or behave differently across tabs. Users hit silent refresh failures that no test suite exercised.

- Browser-specific race conditions

  React state updates and network retries behave fine in Chromium in CI, but Safari timing exposes a bug in optimistic UI rollback.

- Feature flags causing untested branches

  CI validates the default flag path. A percentage rollout combines a new frontend state machine with an old API response shape. Nobody tested that combination.

- Third-party dependency instability

  Stripe, Auth0, S3, or a CRM integration returns slower responses or shape changes. Your app does not crash, but it deadlocks a key workflow.

- AI-generated code that is plausible but incomplete

  A coding assistant adds a loading state, retries, and form validation. It looks polished. But it misses cancellation semantics, double-submit handling, and real error mapping from the API.

None of these failures are unusual. They are normal consequences of distributed systems, frontend complexity, and accelerated development.
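The contract-drift case is a good candidate for a small check at the network boundary. What follows is a minimal sketch, not a prescribed implementation: the `SearchResponse` shape, the `items` field, and the `parseSearchResponse` helper are assumptions made up for illustration. The idea is that a renamed field should fail loudly instead of quietly rendering an empty state.

```typescript
// Hypothetical response shape for a results endpoint; field names are assumptions.
interface SearchResponse {
  totalCount: number;
  items: string[];
}

type ParseResult =
  | { ok: true; value: SearchResponse }
  | { ok: false; reason: string };

// Validate an untyped payload at the boundary instead of trusting generated types.
function parseSearchResponse(payload: unknown): ParseResult {
  if (typeof payload !== "object" || payload === null) {
    return { ok: false, reason: "payload is not an object" };
  }
  const record = payload as Record<string, unknown>;
  const total = record["totalCount"];
  const items = record["items"];
  if (typeof total !== "number") {
    // A renamed field such as total_count ends up here, not in an empty state.
    return { ok: false, reason: "missing or non-numeric totalCount" };
  }
  if (!Array.isArray(items) || !items.every((item) => typeof item === "string")) {
    return { ok: false, reason: "items is not a string array" };
  }
  return { ok: true, value: { totalCount: total, items: items as string[] } };
}

const drifted = parseSearchResponse({ total_count: 3, items: ["a", "b", "c"] });
const current = parseSearchResponse({ totalCount: 3, items: ["a", "b", "c"] });
console.log(drifted.ok, current.ok); // false true
```

A rejected payload can then be reported to telemetry and shown as an explicit error, which makes the drift visible in minutes rather than through support tickets.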

Why this problem is getting worse now

AI-assisted development changes the economics of code production. Teams can create more code, more refactors, more tests, and more features in less time.

But code volume is not the same as system understanding.

When engineers generate or heavily autocomplete implementation details, they often review for plausibility rather than deeply reconstructing all runtime assumptions. This is not negligence; it is a throughput adaptation. The problem is that plausible code often passes narrow tests while failing in integration.

In other words:

- AI can reduce time to implementation

- it does not automatically reduce uncertainty about behavior

- in some teams, it increases uncertainty by expanding the amount of code that exists but has not been meaningfully exercised

That means debugging and testing systems must become more workflow-aware, more observability-driven, and less reliant on green CI as the final confidence signal.

Why Current Approaches Fail

A lot of engineering process still assumes that enough lower-level correctness aggregates into product reliability. Sometimes it does. Often it does not.

Let’s examine where common approaches fall short.

Unit tests are necessary but structurally limited

Unit tests are good at verifying deterministic logic, edge-case branching, parsing, transformations, and pure business rules.

They are bad at proving that real systems composed of routers, network timing, auth, browser behavior, server rendering, feature flags, and third-party dependencies work together correctly.

Consider this JavaScript example:

```js
export function getCheckoutButtonState({ cart, inventory, paymentReady }) {
  if (!cart?.items?.length) return 'disabled';
  if (!inventory) return 'loading';
  if (!paymentReady) return 'disabled';
  return 'enabled';
}
```

A unit test suite for this can be perfect:

```js
import { describe, it, expect } from 'vitest';
import { getCheckoutButtonState } from './checkout';

describe('getCheckoutButtonState', () => {
  it('disables when cart is empty', () => {
    expect(
      getCheckoutButtonState({ cart: { items: [] }, inventory: true, paymentReady: true })
    ).toBe('disabled');
  });

  it('shows loading when inventory is not loaded', () => {
    expect(
      getCheckoutButtonState({ cart: { items: [1] }, inventory: false, paymentReady: true })
    ).toBe('loading');
  });

  it('enables when ready', () => {
    expect(
      getCheckoutButtonState({ cart: { items: [1] }, inventory: true, paymentReady: true })
    ).toBe('enabled');
  });
});
```

That does not tell you whether:

- the inventory request is actually retried correctly

- the UI updates after a race between cart refresh and payment SDK load

- the submit handler is idempotent

- Safari blocks a payment popup

- a feature flag changes when `paymentReady` is ever reached

The unit test passed. The workflow can still fail.

Integration tests often rely on idealized mocks

Integration tests are useful, but many teams over-mock them until they become only slightly larger unit tests.

A React component test might assert that clicking “Save” calls a mocked mutation and shows a success toast. In production, the mutation may resolve after a route transition, and the toast container may unmount. The actual user sees nothing and clicks again, creating duplicate writes.

Example:

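```jsx
import { it, expect, vi } from 'vitest';
import { render, screen } from '@testing-library/react';
import userEvent from '@testing-library/user-event';
// Assumes @testing-library/jest-dom matchers are registered in the test setup.
import { ProfileForm } from './ProfileForm'; // import path assumed for illustration

it('shows success toast after save', async () => {
  // The mutation is mocked, so it always resolves immediately and successfully.
  const saveProfile = vi.fn().mockResolvedValue({ ok: true });
  render(<ProfileForm saveProfile={saveProfile} />);

  await userEvent.type(screen.getByLabelText(/name/i), 'Ava');
  await userEvent.click(screen.getByRole('button', { name: /save/i }));

  expect(saveProfile).toHaveBeenCalled();
  expect(await screen.findByText(/saved/i)).toBeInTheDocument();
});
```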

Helpful, yes. Sufficient, no.

CI/CD gives binary answers to non-binary questions

CI answers: did the checks complete successfully in a controlled environment?

It does not answer:

- will this code work across real browsers, regions, latencies, and auth states?

- does this PR preserve the business-critical path?

- what changed in runtime behavior compared to the previous build?

- which user journeys are now riskier?

Many teams read green CI as “safe to ship.” In practice, green CI often means “nothing failed under current test assumptions.” That is a much narrower claim.

QA is often trapped in manual, late-stage validation

Manual QA still matters, especially for exploratory testing. But many organizations use QA as a catch-all safety net for things engineering could have made observable earlier.

That creates predictable problems:

- testing happens late

- environments drift from production

- repro steps are incomplete

- failures are hard to localize

- fixes are validated by clicking around, not by creating durable coverage

If a bug only appears under a sequence of state transitions, manual verification may find it once and never robustly encode that knowledge.

Logs and metrics are not enough for frontend-heavy systems

Backend engineers often reach for logs, traces, and metrics first. Those are essential, but frontend failures frequently look invisible from the server side.

Examples:

- a request was never sent because the UI deadlocked

- the wrong request was sent because stale state leaked into a mutation

- a request succeeded but the UI never transitioned

- a browser event handler fired twice

- hydration mismatch caused a form to reset unexpectedly

From the API’s perspective, nothing is necessarily wrong. From the user’s perspective, the product is broken.
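One way to make these invisible failures observable is to track workflow steps on the client and report any step that starts but never finishes. The sketch below is only an illustration of that idea: `WorkflowWatchdog`, the step names, and the 10-second threshold are all hypothetical, and timestamps are passed in explicitly so the logic stays easy to test.

```typescript
// Tracks workflow steps that started but never finished.
// All names here are illustrative and not part of any library.
class WorkflowWatchdog {
  private started = new Map<string, number>();
  private maxStepMs: number;

  constructor(maxStepMs: number) {
    this.maxStepMs = maxStepMs;
  }

  start(step: string, nowMs: number): void {
    this.started.set(step, nowMs);
  }

  finish(step: string): void {
    this.started.delete(step);
  }

  // Returns steps that have been pending longer than maxStepMs.
  stuckSteps(nowMs: number): string[] {
    const stuck: string[] = [];
    this.started.forEach((startedAt, step) => {
      if (nowMs - startedAt > this.maxStepMs) stuck.push(step);
    });
    return stuck;
  }
}

const watchdog = new WorkflowWatchdog(10000);
watchdog.start("inventory-check", 0);
watchdog.start("payment-sdk-load", 0);
watchdog.finish("payment-sdk-load");

// 15 seconds later, only the inventory check is still pending.
const stuck = watchdog.stuckSteps(15000);
console.log(stuck);
```

In a real app the stuck list would be sent to whatever telemetry pipeline is already in place, so a deadlocked UI shows up as a signal even when the server saw nothing wrong.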

The Core Insight: Test and Debug User Intent, Not Just Code Paths

The shift is this: stop treating quality as a property proven primarily by source-level correctness, and start treating it as a property demonstrated by workflow reliability.

That means the most important question is not:

Did we test the function?

It is:

Can a real user complete the intended task under realistic conditions, and if not, can we see exactly where the workflow broke?

This sounds obvious, but it changes how you design testing, CI, observability, and debugging.

What workflow reliability looks like

A workflow-reliable system has these properties:

- Critical user journeys are explicitly identified

  Not everything gets the same validation depth. Login, checkout, onboarding, document creation, billing changes, and deployment flows matter more than low-risk UI details.

- End-to-end behavior is exercised in realistic environments

  Real browsers. Real rendering. Preferably real APIs for some paths. Controlled stubs where necessary, but not everywhere.

- Failures produce artifacts useful for debugging

  Traces, screenshots, videos, network logs, console errors, server logs correlated by request IDs.

- Tests encode workflows, not implementation details

  Assert user-visible outcomes and state transitions, not component internals.

- Production signals are tied back to development workflows

  Errors seen in production become reproducible test cases and CI checks, not just incident notes.

- AI-generated changes face behavior-level verification

  If code is produced faster, validation must happen closer to the user experience.

Practical Example: Debugging a Real Checkout Failure

Let’s walk through a realistic scenario in a Next.js app using a backend API and Playwright.

The bug report

Users occasionally click “Place Order” and nothing happens. Support says it’s intermittent. Backend logs show some successful orders and no obvious spike in 500s.

The actual root cause

The frontend disables the button while submitting, but the error path never resets `isSubmitting`. After a timed-out inventory check, the button stays disabled and the user cannot retry.

Here is the code:

```tsx
import { useState } from 'react';

export function CheckoutButton({ cartId }: { cartId: string }) {
  const [isSubmitting, setIsSubmitting] = useState(false);
  const [error, setError] = useState<string | null>(null);

  async function handleSubmit() {
    setIsSubmitting(true);
    setError(null);

    try {
      const inventoryRes = await fetch(`/api/inventory/validate?cartId=${cartId}`);
      if (!inventoryRes.ok) {
        throw new Error('Inventory validation failed');
      }

      const orderRes = await fetch('/api/orders', {
        method: 'POST',
        headers: { 'Content-Type': 'application/json' },
        body: JSON.stringify({ cartId }),
      });
      if (!orderRes.ok) {
        throw new Error('Order creation failed');
      }

      window.location.href = '/order/success';
    } catch (err) {
      setError(err instanceof Error ? err.message : 'Unknown error');
      // bug: forgot setIsSubmitting(false)
    }
  }

  return (
    <div>
      <button disabled={isSubmitting} onClick={handleSubmit}>
        {isSubmitting ? 'Processing...' : 'Place Order'}
      </button>
      {error && <p role="alert">{error}</p>}
    </div>
  );
}
```

A component test might catch some of this, but an end-to-end test reveals the user-visible failure much better.

Playwright test that catches the workflow failure

```ts
import { test, expect } from '@playwright/test';

test('user can recover from inventory validation failure and retry checkout', async ({ page }) => {
  // Force the inventory check to fail the way a gateway timeout would.
  await page.route('**/api/inventory/validate**', async route => {
    await route.fulfill({
      status: 504,
      contentType: 'application/json',
      body: JSON.stringify({ error: 'Gateway Timeout' }),
    });
  });

  await page.goto('/checkout');
  await page.getByRole('button', { name: /place order/i }).click();

  await expect(page.getByRole('alert')).toContainText('Inventory validation failed');

  // The button must be usable again so the user can retry.
  const button = page.getByRole('button', { name: /processing|place order/i });
  await expect(button).toBeEnabled();
});
```

That test encodes a real user expectation: after a transient failure, the workflow should remain recoverable.

Why this test is more valuable than it looks

This is not just testing a button. It is testing:

- network failure handling

- state reset logic

- user recoverability

- a business-critical workflow

That is the kind of test that prevents real incidents.

Debugging with Playwright traces

When this fails in CI, Playwright traces can dramatically reduce time to diagnosis.

Enable traces in `playwright.config.ts`:

```ts
import { defineConfig } from '@playwright/test';

export default defineConfig({
  use: {
    trace: 'retain-on-failure',
    screenshot: 'only-on-failure',
    video: 'retain-on-failure',
  },
});
```

Then inspect:

- network timeline

- console errors

- DOM snapshots before and after click

- action sequence timing

This is the difference between “cannot reproduce” and “the button never re-enabled because the catch path skipped a state reset.”
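Once the trace points at the skipped reset, one common way to close the gap is to move the reset into a `finally` block so no error path can bypass it. The sketch below pulls that logic out of the component so it can be exercised without React. `runSubmit`, `SubmitState`, and the `setState` callback are stand-ins invented for this example, not the component's actual API.

```typescript
// Minimal state the button cares about; mirrors the component shown earlier.
interface SubmitState {
  isSubmitting: boolean;
  error: string | null;
}

// Runs a submit action and always clears isSubmitting, on success or failure.
// `action` and `setState` stand in for the fetch calls and the React setters.
async function runSubmit(
  action: () => Promise<void>,
  setState: (patch: Partial<SubmitState>) => void,
): Promise<void> {
  setState({ isSubmitting: true, error: null });
  try {
    await action();
  } catch (err) {
    setState({ error: err instanceof Error ? err.message : "Unknown error" });
  } finally {
    // The reset lives in finally so no error path can skip it.
    setState({ isSubmitting: false });
  }
}

// Simulate the timed-out inventory check from the bug report.
const state: SubmitState = { isSubmitting: false, error: null };
const done = runSubmit(
  async () => {
    throw new Error("Inventory validation failed");
  },
  (patch) => {
    Object.assign(state, patch);
  },
);
done.then(() => console.log(state.isSubmitting, state.error));
```

Resetting on the success path as well is harmless here, because the page navigates away immediately after a successful order.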

Building a Better Testing Pyramid for Modern Apps

The classic testing pyramid still has value, but teams should reinterpret it.

Instead of thinking only in terms of unit > integration > E2E volume, think in terms of behavior confidence per layer.

Suggested distribution

1. Unit tests for deterministic logic

Use them for:

- pricing calculations

- permission rules

- data transformation

- parser behavior

- reducers/state machines

Python example for business rule validation:

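```python
def calculate_discount(user_tier: str, total_cents: int) -> int:
    """Return the discount in cents; orders under 5000 cents get none."""
    if total_cents < 5000:
        return 0
    # Integer arithmetic keeps cent amounts exact and avoids float rounding.
    if user_tier == 'pro':
        return total_cents * 15 // 100
    if user_tier == 'team':
        return total_cents * 10 // 100
    return total_cents * 5 // 100
```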

These should be fast and abundant where logic is dense.

2. Integration tests for contracts and state transitions

Use them for:

- API handlers with database interactions

- component + state + network layers

- auth middleware behavior

- background job orchestration

Focus on realistic data and fewer mocks.

3. End-to-end tests for critical workflows

Use E2E sparingly but seriously. Not hundreds of flaky vanity tests. A curated set of business-critical journeys:

- sign up

- sign in

- onboarding completion

- purchase/checkout

- core CRUD flow

- export/share flow

- billing updates

- permission-sensitive actions

The question is not “can we E2E test everything?” It is “which failures would actually hurt users or revenue?”

Tool Comparisons: What to Use and When

Playwright vs Cypress for browser automation

Playwright strengths:

- strong multi-browser support

- excellent tracing/debugging artifacts

- multiple tabs, contexts, permissions, network controls

- good fit for modern CI and parallelization

- better coverage when browser-specific behavior matters

Cypress strengths:

- approachable developer experience

- strong interactive runner

- large ecosystem and familiarity

- good for teams already invested in it

If you care deeply about workflow reliability across browsers and want better debugging artifacts, Playwright is often the stronger default.

Jest/Vitest vs browser-level validation

Use Jest or Vitest for:

- pure logic

- server utilities

- fast feedback

- targeted component logic

Do not expect them to prove browser runtime correctness. They are not substitutes for real browser execution.

Postman/Newman vs application-level contract tests

Postman collections are useful for API exploration and smoke checks. But they often stop short of full system confidence because they bypass frontend state, auth UX, and browser behavior.

Use them for API checks, not as a replacement for user workflow testing.

OpenTelemetry, logs, and session replay

These tools answer different questions:

- logs: what happened on the server?

- metrics: how often and how severe?

- traces: where did latency or failure propagate?

- session replay: what did the user actually experience?

If your app is frontend-heavy, combining backend observability with session replay and browser test traces gives much more complete debugging coverage than any one signal alone.

CI/CD That Reflects Real Risk

Most pipelines treat every PR similarly. That is easy to operate but wasteful and misleading.

A better model is risk-based validation.

Example CI strategy

For every PR:

- lint

- typecheck

- unit tests

- targeted integration tests

- changed-area Playwright smoke tests

For high-risk changes, also run:

- full critical-path E2E suite

- contract tests against staging APIs

- visual regression for key pages

- cross-browser runs

For deploys:

- synthetic checks against production or preview env

- canary release validation

- rollback criteria tied to user journey failures, not just CPU or 500s
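The tiers above imply some rule for deciding which changes count as high-risk. A minimal sketch of one possible rule follows, based on which paths a PR touches. The path prefixes and check names are hypothetical placeholders; a real team would derive them from its own critical workflows.

```typescript
type RiskLevel = "standard" | "high";

// Hypothetical path prefixes that touch business-critical workflows.
const HIGH_RISK_PREFIXES = ["src/checkout/", "src/auth/", "src/billing/"];

// A PR is high-risk if any changed file sits under a critical prefix.
function classifyRisk(changedFiles: string[]): RiskLevel {
  const touchesCriticalPath = changedFiles.some((file) =>
    HIGH_RISK_PREFIXES.some((prefix) => file.startsWith(prefix)),
  );
  return touchesCriticalPath ? "high" : "standard";
}

// Maps a risk level to the checks that should run for it.
function checksFor(risk: RiskLevel): string[] {
  const base = ["lint", "typecheck", "unit", "integration", "smoke-e2e"];
  if (risk === "standard") return base;
  return base.concat(["critical-e2e", "contract", "visual-regression", "cross-browser"]);
}

const risk = classifyRisk(["src/checkout/CheckoutButton.tsx", "README.md"]);
console.log(risk, checksFor(risk).length); // high 9
```

A script like this could apply a PR label automatically, so the decision does not depend on the author remembering to flag the change.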

GitHub Actions example

```yaml
name: ci

on:
  pull_request:
  push:
    branches: [main]

jobs:
  validate:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-node@v4
        with:
          node-version: 20
      - run: npm ci
      - run: npm run lint
      - run: npm run typecheck
      - run: npm run test:unit
      - run: npm run test:integration
      # Browsers must be installed before any Playwright run.
      - run: npx playwright install --with-deps
      - run: npx playwright test tests/smoke

  critical-e2e:
    # Runs only for pull requests labeled 'high-risk'.
    if: contains(github.event.pull_request.labels.*.name, 'high-risk')
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-node@v4
        with:
          node-version: 20
      - run: npm ci
      - run: npx playwright install --with-deps
      - run: npx playwright test tests/critical
```

This is not perfect, but it is already better than pretending all changes deserve identical validation.

Debugging Techniques That Actually Reduce Time to Resolution

When workflow failures happen, speed of diagnosis matters as much as prevention.

1. Correlate frontend and backend with request IDs

Generate a request or session correlation ID in the client and pass it through API calls.

```ts
const correlationId = crypto.randomUUID();

await fetch('/api/orders', {
  method: 'POST',
  headers: {
    'Content-Type': 'application/json',
    'X-Correlation-ID': correlationId,
  },
  body: JSON.stringify({ cartId }),
});
```

Then log it server-side. This makes “what happened to this user?” answerable.
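The server half can be as small as reading the header and attaching it to every log line. Here is a framework-agnostic sketch under stated assumptions: `HeaderBag`, `readCorrelationId`, and `formatLog` are hypothetical helpers, and the plain-object header shape is a simplification of whatever request type your server actually exposes.

```typescript
// Simplified header container; real frameworks expose their own request types.
type HeaderBag = Record<string, string | undefined>;

// HTTP header names are case-insensitive, so compare lowercased keys.
function readCorrelationId(headers: HeaderBag): string | undefined {
  for (const key of Object.keys(headers)) {
    if (key.toLowerCase() === "x-correlation-id") return headers[key];
  }
  return undefined;
}

// Emits one structured log line that can be searched by correlationId.
function formatLog(headers: HeaderBag, message: string): string {
  return JSON.stringify({
    correlationId: readCorrelationId(headers) ?? "missing",
    message,
  });
}

const line = formatLog({ "X-Correlation-ID": "abc-123" }, "order created");
console.log(line); // {"correlationId":"abc-123","message":"order created"}
```

Logging an explicit `missing` marker is a deliberate choice in this sketch: it makes requests that bypass the client instrumentation easy to find.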

2. Preserve failure artifacts automatically

For failed browser tests, keep:

- screenshot

- trace

- video

- console logs

- network HAR if useful

Do not force engineers to rerun flaky scenarios locally before they can even inspect them.

3. Reproduce with network shaping

Many bugs only appear under realistic latency or partial failures.

Examples to simulate:

- slow 3G

- API timeout on the second request

- partial page load

- dropped websocket reconnect

- expired auth token during mutation
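Several of these conditions can be simulated in tests by wrapping the function that makes requests. The following is a rough sketch of that approach, not a real network shaper: `Fetcher` is a deliberately minimal stand-in for a fetch-like function, and `withFaults` and `FaultPlan` are names invented here.

```typescript
// Minimal stand-in for a fetch-like function; not the real fetch signature.
type Fetcher = (url: string) => Promise<{ status: number }>;

interface FaultPlan {
  delayMs: number; // latency added to every call
  failOnCall?: number; // 1-based call number that should time out
}

// Wraps a fetcher so tests can inject latency and a failure on the Nth call.
function withFaults(inner: Fetcher, plan: FaultPlan): Fetcher {
  let calls = 0;
  return async (url) => {
    // Capture the call number now; the shared counter may advance during the delay.
    const callNumber = (calls += 1);
    await new Promise<void>((resolve) => setTimeout(resolve, plan.delayMs));
    if (callNumber === plan.failOnCall) {
      return { status: 504 }; // simulate a gateway timeout
    }
    return inner(url);
  };
}

// Healthy backend, but the second request times out.
const flaky = withFaults(async () => ({ status: 200 }), { delayMs: 0, failOnCall: 2 });
const responses = Promise.all([flaky("/api/cart"), flaky("/api/orders")]);
responses.then((r) => console.log(r.map((x) => x.status))); // [ 200, 504 ]
```

In browser tests the same idea is usually expressed through the test runner's request interception, as in the Playwright route handler shown earlier.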

4. Turn incidents into tests

The best test suites are often built from previous failures.

If a production outage occurred because a user changed billing plans with an expired session and got stuck in a redirect loop, encode that exact sequence in an automated test.

This creates organizational memory.

5. Test retries and recovery, not only success

A lot of teams heavily test happy paths and barely test recovery paths.

But real reliability depends on:

- retries not duplicating writes

- spinners resolving

- buttons re-enabling

- stale data being invalidated

- users seeing actionable errors

- workflows allowing safe retry or rollback
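The first item, retries that do not duplicate writes, is commonly handled with an idempotency key that stays the same across attempts. The sketch below uses an in-memory fake to show the behavior a recovery test would assert. `FakeOrderApi` and `createOrderWithRetry` are illustrative only and do not model any particular payment or order API.

```typescript
// In-memory stand-in for an order API that honors idempotency keys.
class FakeOrderApi {
  private seen = new Map<string, string>();
  private attempts = 0;
  orders: string[] = [];

  // The first attempt records the write, then fails, like a lost response.
  create(idempotencyKey: string, cartId: string): string {
    this.attempts += 1;
    let orderId = this.seen.get(idempotencyKey);
    if (orderId === undefined) {
      orderId = "order-" + cartId;
      this.seen.set(idempotencyKey, orderId);
      this.orders.push(orderId);
    }
    if (this.attempts === 1) throw new Error("response lost");
    return orderId;
  }
}

// Retries with the SAME key so a repeated request cannot create a second order.
function createOrderWithRetry(
  api: FakeOrderApi,
  key: string,
  cartId: string,
  maxAttempts: number,
): string {
  let lastError: unknown;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    try {
      return api.create(key, cartId);
    } catch (err) {
      lastError = err;
    }
  }
  throw lastError;
}

const api = new FakeOrderApi();
const orderId = createOrderWithRetry(api, "key-1", "cart-42", 3);
console.log(orderId, api.orders.length); // order-cart-42 1
```

The assertion that matters is the order count: the retry succeeded, and exactly one order exists.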

Best Practices for Teams Shipping Fast with AI Assistance

These practices matter even more when AI increases code throughput.

Define critical workflows explicitly

Write them down. Name them. Version them if needed.

Examples:

- new user creates workspace

- admin invites teammate

- customer upgrades subscription

- analyst uploads CSV and exports results

If a workflow matters to the business, it should have an owner and automated validation.
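Writing workflows down can be as lightweight as a typed registry checked in next to the tests. A small sketch follows, assuming a made-up `CriticalWorkflow` shape; the owners and spec paths are placeholders, not references to real files.

```typescript
interface CriticalWorkflow {
  name: string;
  owner: string; // team accountable for the workflow
  e2eSpec: string | null; // path to the automated test, if one exists
}

// Hypothetical registry; owners and spec paths are placeholders.
const workflows: CriticalWorkflow[] = [
  { name: "new user creates workspace", owner: "growth", e2eSpec: "tests/critical/workspace.spec.ts" },
  { name: "admin invites teammate", owner: "collab", e2eSpec: "tests/critical/invite.spec.ts" },
  { name: "customer upgrades subscription", owner: "billing", e2eSpec: null },
  { name: "analyst uploads CSV and exports results", owner: "", e2eSpec: "tests/critical/export.spec.ts" },
];

// Returns workflows that are missing an owner or automated validation.
function findGaps(list: CriticalWorkflow[]): string[] {
  return list
    .filter((w) => w.owner.trim() === "" || w.e2eSpec === null)
    .map((w) => w.name);
}

// Flags the subscription upgrade (no spec) and the CSV export (no owner).
const gaps = findGaps(workflows);
console.log(gaps);
```

Running a check like this in CI turns "every critical workflow has an owner and a test" from a stated intention into something that fails visibly when it stops being true.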

Review AI-generated code for runtime assumptions

When reviewing generated code, ask:

- what happens if this request times out?

- is this action idempotent?

- what if the response shape changes slightly?

- what if this component unmounts before the promise resolves?

- how does this behave under double-clicks or retries?
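The double-click question has a small, reusable answer: make overlapping calls share one in-flight promise. This is a sketch of that pattern under the assumption that the action is safe to retry once it settles; `singleFlight` and `submitOrder` are hypothetical names.

```typescript
// Wraps an async action so overlapping calls share one in-flight promise.
function singleFlight<T>(action: () => Promise<T>): () => Promise<T> {
  let inFlight: Promise<T> | null = null;
  return () => {
    if (inFlight === null) {
      inFlight = action().finally(() => {
        inFlight = null; // allow a later retry once this attempt settles
      });
    }
    return inFlight;
  };
}

let submissions = 0;
const submitOrder = singleFlight(async () => {
  submissions += 1;
  return "order-1";
});

// A double-click calls the handler twice before the first request settles.
const firstClick = submitOrder();
const secondClick = submitOrder();
console.log(firstClick === secondClick, submissions); // true 1
```

This guards the client side only. The server still needs its own protection against duplicate writes, since requests can be repeated from other tabs or by network retries.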

This is where many “looks good to me” reviews fail.

Prefer fewer, stronger end-to-end tests

A brittle forest of UI tests is not maturity. It is noise.

Aim for a compact suite of high-value workflow tests that:

- map to real business outcomes

- are observable when they fail

- run consistently in CI

- are maintained like product infrastructure

Use production telemetry to prioritize coverage

If 40% of support tickets come from onboarding and billing, that is where deeper E2E coverage belongs.

Testing strategy should follow user pain and business risk, not team habit.
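As a rough illustration of letting telemetry drive coverage, the sketch below picks the smallest set of workflows that accounts for a target share of support tickets. The ticket counts are invented for the example, and `workflowsCovering` is a hypothetical helper.

```typescript
interface TicketCount {
  workflow: string;
  tickets: number;
}

// Picks the highest-volume workflows until they cover targetShare of all tickets.
function workflowsCovering(counts: TicketCount[], targetShare: number): string[] {
  const total = counts.reduce((sum, c) => sum + c.tickets, 0);
  const ranked = counts.slice().sort((a, b) => b.tickets - a.tickets);
  const picked: string[] = [];
  let covered = 0;
  for (const entry of ranked) {
    if (total === 0 || covered / total >= targetShare) break;
    picked.push(entry.workflow);
    covered += entry.tickets;
  }
  return picked;
}

// Made-up ticket counts for illustration only.
const tickets: TicketCount[] = [
  { workflow: "search", tickets: 15 },
  { workflow: "onboarding", tickets: 30 },
  { workflow: "settings", tickets: 10 },
  { workflow: "billing", tickets: 25 },
  { workflow: "export", tickets: 20 },
];

// Onboarding and billing together account for 55 of 100 tickets.
const priority = workflowsCovering(tickets, 0.5);
console.log(priority);
```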

Separate confidence signals

Not all green checks mean the same thing. Distinguish between:

- code quality checks

- logic correctness

- contract compatibility

- workflow reliability

- production health

This helps decision-makers understand what is actually safe.

Invest in preview environments

Testing workflows in ephemeral or preview environments linked to PRs makes debugging dramatically easier, especially for frontend changes and full-stack interactions.

A senior engineer’s productivity often depends less on writing code faster and more on shortening the loop between suspected bug and trustworthy reproduction.

A More Honest Model of Software Quality

Many teams still operate with an implicit fantasy: if enough tests pass, quality emerges.

A more honest model is this:

- software fails at boundaries

- modern apps have more boundaries than ever

- generated code increases surface area faster than understanding

- CI is a filter, not a guarantee

- reliability comes from validating and observing user workflows under realistic conditions

That does not mean unit tests are obsolete. They are not.

It means they occupy a narrower place in the confidence stack than many organizations admit.

A senior engineering team should be able to answer:

- what are our highest-risk user journeys?

- how are they validated before deploy?

- what artifacts exist when they fail?

- how quickly can we reproduce production issues?

- which classes of incidents are currently invisible to our pipeline?

If those answers are vague, the problem is not lack of effort. It is that the testing and debugging system is optimized for code correctness instead of workflow reliability.

Conclusion

The uncomfortable truth of modern software development is that passing tests and green CI often describe only a small part of reality.

In AI-assisted development, this gap gets wider. Teams can generate and ship more code, but the real challenge is proving that users can still accomplish what they came to do.

The path forward is not “more tests” in the abstract. It is better alignment between validation and user intent.

Test business-critical workflows in real browsers. Design CI around risk, not ritual. Preserve artifacts that make failures diagnosable. Use observability to connect user pain to reproducible cases. Turn production incidents into durable automated coverage. Review generated code with suspicion for runtime edges, not just syntax and style.

If you do this well, debugging becomes less of a late-stage scramble and more of a continuous system for learning where your product actually breaks.

That is the shift worth making now: stop treating reliability as something inferred from isolated checks, and start treating it as something demonstrated by users successfully completing real workflows.